Data-Center Performance on a Battery. We build Brain-Inspired Spiking Foundation Models that run locally, efficiently, and privately.

Modern AI is hitting a wall. Power-hungry Transformers are trapped in the cloud, consuming massive energy and causing the Edge Bottleneck.

Unsustainable power draw makes global scaling impossible and environmentally taxing.

Disconnected devices remain "dim", unable to process complex vision without cloud access.

Forced dependence on 5G/Fiber creates critical failure points in autonomous missions.

| Feature | Traditional AI | Aneuro (Spiking) |

|---|---|---|

| Visual |

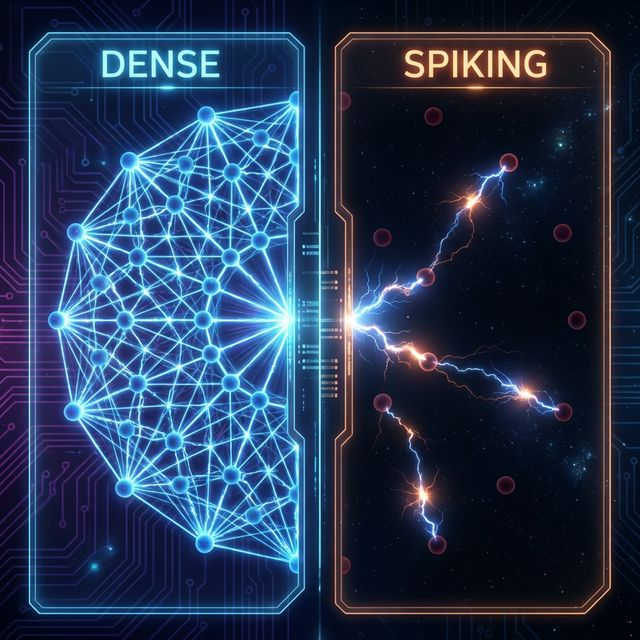

Dense Grid

i

Heavy Math: Every layer performs billions of

float multiplications per second, regardless of data content.

|

Sparse Spark

i

Pure Signal: Only active spikes trigger

computation. No activity = No energy consumption.

|

| Math |

Multiplication (A × B)

i

(A × B): Power-hungry floating-point ops

require complex ALU units in

chips.

|

Accumulate (A + B)

i

(A + B): Simple binary addition. Reduces

silicon footprint and heat generation by 90%.

|

| Neuron |

Continuous Floats

i

Information is a high-precision decimal (e.g. 0.823).

Requires constant memory traffic.

|

Discrete Spikes

i

Information is a binary pulse (0 or 1). Mimics

biological neuron firing.

|

| Activity |

Always On

i

The Sleep Factor: Looking at a blank wall costs

the same energy as a crowd. Multipliers never rest.

|

Event-Driven

i

The Sleep Factor: Zero change = Zero spikes.

The chip sleeps when idle, waking only for signal.

|

| Energy |

10 Joules

i

Metric representing high-performance GPU energy consumption for a

single inference.

|

0.1 Joules

i

Dramatic 100x efficiency gain by eliminating idle

cycles and complex math.

|

| Speed |

Frame-Based

i

Waiting for the next clock cycle or video frame (e.g.

60Hz) creates bottleneck.

|

Spike-Based

i

Asynchronous processing. Information travels at the

speed of the spike pulse.

|

| Hardware |

GPU Clusters

i

Requires expensive cloud infrastructure or data-center

cooling systems.

|

Neuromorphic

i

Native fit for chips like Intel Loihi or BrainChip

Akida. Runs on ultra-low voltage.

|
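To make the "Discrete Spikes" and "Event-Driven" rows concrete, here is a minimal sketch of a textbook leaky integrate-and-fire neuron. It is not Aneuro's implementation: the `LIFNeuron` class, its `threshold` and `leak` values, and the subtractive leak are assumptions chosen for illustration. It only shows that the neuron's output is a binary pulse and that silent input produces no spikes.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Leaky integrate-and-fire neuron (illustrative; all values are assumptions)."""
    threshold: float = 1.0  # membrane potential at which the neuron fires
    leak: float = 0.1       # amount subtracted from the potential each step
    potential: float = 0.0  # current membrane potential

    def step(self, input_current: float) -> int:
        """Advance one time step; return 1 if the neuron spikes, else 0."""
        # Subtractive leak keeps the update to additions and comparisons only.
        self.potential = max(0.0, self.potential - self.leak) + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return 1
        return 0


def run(neuron: LIFNeuron, currents: list) -> list:
    """Feed a sequence of input currents and collect the output spike train."""
    return [neuron.step(c) for c in currents]


if __name__ == "__main__":
    print(run(LIFNeuron(), [0.0, 0.0, 0.0, 0.0]))       # no input, no spikes
    print(run(LIFNeuron(), [0.6, 0.6, 0.0, 0.6, 0.6]))  # spikes once input accumulates
```

With no input the potential never leaves zero, which is the software analogue of "Zero change = Zero spikes".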

We've replaced matrix multiplications with sparse, binary spike computations. Our 0.8B model outperforms models 10x its size on industry benchmarks like MMLU-Pro (Massive Multitask Language Understanding Pro: a rigorous benchmark evaluating reasoning and knowledge across 14,000+ tasks).
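The idea of swapping matrix multiplication for spike-driven accumulation can be sketched in a few lines. This is a generic illustration, not the Aneuro kernel: the function names and the toy weights are hypothetical. When inputs are binary spikes, a matrix-vector product reduces to adding the weight column of each active input, so the work scales with the number of spikes rather than the size of the matrix.

```python
def dense_matvec(weights, activations):
    """Conventional layer: one multiply-accumulate per weight."""
    return [sum(w * a for w, a in zip(row, activations)) for row in weights]


def spike_matvec(weights, spikes):
    """Spiking layer: for binary inputs, add the weight column of each active input."""
    out = [0.0] * len(weights)
    additions = 0
    for j, s in enumerate(spikes):
        if s:  # silent inputs are skipped entirely
            for i, row in enumerate(weights):
                out[i] += row[j]
                additions += 1
    return out, additions


# Toy 2x4 weight matrix and a spike vector with two of four inputs active.
weights = [[0.5, -1.0, 2.0, 0.25],
           [1.5, 0.75, -0.5, 1.0]]
spikes = [1, 0, 0, 1]

out, additions = spike_matvec(weights, spikes)
print(out, additions)                 # [0.75, 2.5] 4
print(dense_matvec(weights, spikes))  # [0.75, 2.5], using 8 multiplications
```

Both functions return the same result; the spiking version reaches it with 4 additions and no multiplications because half of the inputs are silent.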

Traditional vision models process frames. Aneuro processes Events.

By moving to Event-Based Spiking ViTs (Spiking Vision Transformers: a bio-inspired version of the Transformer architecture that uses temporal spikes instead of dense pixel frames), we achieve microsecond latency, enabling reflex motor control for drones, robots, and autonomous vehicles.
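The difference between processing frames and processing events can be illustrated with a simple change detector. This sketch emulates events by differencing frames, which is an assumption made for clarity; a real event sensor reports changes asynchronously per pixel. The function name, the `threshold` value, and the `(t, pixel_index, polarity)` tuple layout are all hypothetical.

```python
def frames_to_events(frames, threshold=0.1):
    """Emit (t, pixel_index, polarity) when a pixel changes by more than threshold."""
    events = []
    reference = list(frames[0])  # last brightness that triggered an event, per pixel
    for t, frame in enumerate(frames[1:], start=1):
        for i, value in enumerate(frame):
            delta = value - reference[i]
            if abs(delta) > threshold:
                events.append((t, i, 1 if delta > 0 else -1))
                reference[i] = value
    return events


# A static scene (the "blank wall") produces no events at all.
static = [[0.2, 0.2, 0.2]] * 3
print(frames_to_events(static))  # []

# A bright spot moving from pixel 0 to pixel 1 produces three events.
moving = [[0.0, 0.0, 0.0],
          [1.0, 0.0, 0.0],
          [0.0, 1.0, 0.0]]
print(frames_to_events(moving))  # [(1, 0, 1), (2, 0, -1), (2, 1, 1)]
```

Downstream computation is only needed for the emitted events, so an unchanging scene costs nothing beyond the comparison itself.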

Latency-Free Reflexes: Using MLSA (Multi-Head Latent Spiking Attention: our proprietary attention mechanism that combines spiking data with latent compression for zero-latency processing), we eliminate frame-scan waits, enabling microsecond response times for complex motor control.

Event-Driven Dynamics: Pure additive math, combining Aneuro MLA (Multi-Head Latent Attention: our advanced compression technique that optimizes attention metadata to save memory without losing context) with SNNs (Spiking Neural Networks: the third generation of neural networks, designed to mimic biological neuron activity via discrete spikes), enables active on-demand efficiency, delivering massive throughput without the thermal/power spike of dense ViTs (Vision Transformers: deep learning models that apply the transformer architecture, originally designed for text, to visual data in chunks or "patches").

Threat-Active Edge: Our Spiking MoE (Spiking Mixture of Experts: a sparse architecture where only a subset of specific experts fire in response to signal, drastically reducing power draw) activates only when threats are detected, ensuring efficient local reasoning on ultra-low power.
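As a rough illustration of sparse expert activation, the sketch below runs only the experts whose gate score crosses a firing threshold and does no expert work when nothing crosses it. It is a generic gating pattern, not the Spiking MoE itself: the `route` function, the `fire_threshold` value, the gate scores, and the toy experts are all hypothetical.

```python
def route(gate_scores, experts, x, fire_threshold=0.5):
    """Run only the experts whose gate score reaches the firing threshold."""
    active = [i for i, score in enumerate(gate_scores) if score >= fire_threshold]
    outputs = {i: experts[i](x) for i in active}  # inactive experts are never called
    return active, outputs


# Three toy experts standing in for specialised sub-networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: -x]

print(route([0.1, 0.9, 0.2], experts, 3))  # ([1], {1: 6}): one expert fires
print(route([0.0, 0.1, 0.2], experts, 3))  # ([], {}): no signal, no expert runs
```

The cost per input tracks the number of experts that fire rather than the total number of experts in the model.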

Memory Efficiency: Latent compression reduces the KV cache (Key-Value Cache: the memory used to store past conversation context) by 90%, enabling full-scale foundation models on small-sat hardware.
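To give a sense of scale for a 90% KV cache reduction, here is a back-of-the-envelope calculation. The model shape (24 layers, 16 KV heads, head dimension 64, 4096-token context, 2 bytes per value) is a hypothetical example and not Aneuro's architecture; the sizing formula is the standard one for an uncompressed cache, and the 90% figure is simply the one stated above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Uncompressed KV cache size: keys and values for every layer, head, and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value


def compressed_bytes(full_bytes, reduction_percent=90):
    """Apply a stated percentage reduction using integer arithmetic."""
    return full_bytes * (100 - reduction_percent) // 100


# Hypothetical model shape, chosen only to make the arithmetic concrete.
full = kv_cache_bytes(layers=24, kv_heads=16, head_dim=64, seq_len=4096)
small = compressed_bytes(full)
print(f"full: {full / 2**20:.1f} MiB, compressed: {small / 2**20:.1f} MiB")
# prints "full: 384.0 MiB, compressed: 38.4 MiB"
```

For this example shape, the cache shrinks from 384 MiB to roughly 38 MiB, which is the kind of difference that decides whether a model fits in the memory of a small embedded board.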

Strategic licensing for Robotics, Defense, and Aerospace OEMs. Our spiking foundation models integrate directly into next-gen industrial silicon.

Native integration in Premium Consumer Hardware. Bringing ultra-efficient, private "Local Minds" to phones, tablets, and home bots.

We are live with a functional API and Control Panel. We are seeking strategic partners and pre-seed capital to scale our multi-modal spiking ecosystem.